Your survey results say 87% of customers love your new packaging. Six months later, the repeat purchase rate has not moved. Reviews still mention the old packaging. Your CX team is fielding the same complaints.

What happened?

You probably asked a leading question and got the answer you wanted, not the answer that was true. That happens more than most growth teams realize, and it quietly distorts every decision downstream: which products to feature, where to spend ad dollars, what to fix on the PDP, what to put on the homepage.

Survey data is only as good as the survey questions behind it. Bad questions produce confident, useless answers. Good questions produce something rarer and more valuable: the real reason a customer bought, hesitated, returned, or recommended you.

Here is how to spot leading questions in your questionnaire, why they happen, and how to rewrite them so the data you collect actually moves the business.

What is a leading question?

A leading question is any question that nudges the respondent toward a specific answer. The nudge can be obvious or buried in a single adjective. Either way, the result is the same: you stop measuring what the customer thinks and start measuring how well you wrote the question.

Leading questions usually take one of four shapes:

- Loaded language. The question includes a value-laden word that pre-decides the answer.

- Assumption baked in. The question assumes something the respondent has not confirmed.

- Imbalanced scale. The answer options skew positive or negative instead of staying neutral.

- Two questions in one. The question asks two things at once, forcing a single answer to cover both.

A neutral question lets the respondent disagree without friction. A leading question makes disagreement feel like work.

Types of leading questions

Not all leading questions look the same. Knowing the main types helps you catch them before they reach a live survey.

Assumption-based leading questions embed a belief the customer has not confirmed. They take it as given that something happened, worked, or was noticed. The respondent answers based on the assumption rather than their real experience.

Coercive leading questions apply subtle pressure toward a particular answer. They frame the question so that disagreement feels socially awkward or contrarian. These show up often in customer satisfaction surveys where the brand unconsciously steers customers toward a positive response.

Double-barreled questions ask two things at once and force a single answer to cover both. They are one of the most common mistakes in survey design and one of the easiest to fix once you know what to look for.

Each of these types produces the same outcome: respondents are led away from honest answers and toward a desired response. The survey creator ends up with data that reflects their own preconceived notions more than actual customer sentiment.

Why leading questions show up so often in ecommerce surveys

Most leading questions are not written in bad faith. They are written by people who care about the brand and want it to do well. That care is exactly the problem. When you love your product, it is hard to phrase a question that leaves room for someone to dislike it.

The other common cause is internal stakeholder pressure. The product team wants validation for the new launch. The CX team wants proof the new return policy is working. The CMO wants a stat for the next investor update. Each of those needs creates pressure to write a question that delivers the desired answer, and the survey ends up serving the team instead of the customer.

The cost is real. Decisions get made on shaky market research, budgets get pointed at the wrong levers, and the brand keeps wondering why the metrics that should be moving are not. Poor phrasing in a single question can skew an entire data set and send your decision-making in the wrong direction for months.

8 examples of leading questions in a survey (and how to rewrite them)

Here are examples of leading questions in the wild, paired with cleaner versions you can use today.

1. The compliment fishing question

Leading: How much did you love our new checkout experience?

Why it is leading: “Love” pre-supposes a positive emotion. There is no graceful way for someone to say the checkout was frustrating without rejecting the premise of the question.

Cleaner version: How would you describe your checkout experience today?

2. The assumption question

Leading: Which of our premium features helped you the most?

Why it is leading: It assumes the customer used premium features and assumes those features helped. A respondent who never noticed the features has nowhere to go.

Cleaner version: Did any specific features influence your decision to buy? If so, which ones?

3. The negative framing question

Leading: Was anything about our slow shipping frustrating?

Why it is leading: “Slow shipping” is presented as fact. Even customers who thought shipping was fine may now reconsider.

Cleaner version: How would you rate your shipping experience?

4. The double-barreled question

Leading: How satisfied are you with our product quality and customer service?

Why it is leading: Two questions, one answer. A customer who loves the product but had a bad support experience cannot answer honestly.

Cleaner version: Two separate questions. Rate product quality. Then rate customer service.

5. The social proof nudge

Leading: Most of our customers say our skincare changed their routine. What changed for you?

Why it is leading: It signals what other people said before the respondent answers, which biases the response toward agreement.

Cleaner version: Has anything in your routine changed since you started using the product?

6. The imbalanced scale

Leading: How would you rate your experience? Excellent, Very Good, Good, Fair.

Why it is leading: Three positive options and one mildly negative option. With nothing below “Fair,” an unhappy customer has no accurate answer to pick, so the average lands on a positive label even when sentiment is mixed.

Cleaner version: Use a balanced 5-point scale with a clear neutral midpoint, or use a 0 to 10 scale like a properly formatted NPS question.
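To make the skew concrete, here is a minimal sketch that counts how many answer options on each scale read as positive, neutral, or negative. The polarity tags and the wording of the balanced scale are assumptions for illustration, not a standard classification.

```python
from collections import Counter

# Polarity tags are assumed for illustration; "Fair" is treated as mildly negative.
IMBALANCED_SCALE = {
    "Excellent": "positive",
    "Very Good": "positive",
    "Good": "positive",
    "Fair": "negative",
}

# One possible balanced 5-point scale with a neutral midpoint (wording is hypothetical).
BALANCED_SCALE = {
    "Very satisfied": "positive",
    "Satisfied": "positive",
    "Neither satisfied nor dissatisfied": "neutral",
    "Dissatisfied": "negative",
    "Very dissatisfied": "negative",
}


def polarity_shares(scale):
    """Return the share of answer options that fall in each polarity bucket."""
    counts = Counter(scale.values())
    return {p: counts[p] / len(scale) for p in ("positive", "neutral", "negative")}


def is_balanced(scale):
    """Treat a scale as balanced when it offers as many negative options as positive ones."""
    counts = Counter(scale.values())
    return counts["positive"] == counts["negative"]


print(polarity_shares(IMBALANCED_SCALE))  # positive 0.75, neutral 0.0, negative 0.25
print(is_balanced(IMBALANCED_SCALE))      # False
print(polarity_shares(BALANCED_SCALE))    # positive 0.4, neutral 0.2, negative 0.4
print(is_balanced(BALANCED_SCALE))        # True
```

If answers were spread evenly across the options, three quarters of the responses on the imbalanced scale would read as positive before any real sentiment entered the picture.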

7. The “you agree, right?” question

Leading: Do you agree that fast shipping is important?

Why it is leading: Almost no one disagrees that fast shipping is important. The answer is meaningless because it has no variance.

Cleaner version: What factors mattered most in your decision to buy today? Show a list that includes shipping speed alongside price, reviews, product features, and others.

8. The brand-loving question

Leading: What is your favorite thing about our brand?

Why it is leading: It assumes the respondent has a favorite thing, which assumes positive feelings overall. A neutral or negative customer has to invent something.

Cleaner version: What stood out to you about your experience with us, good or bad?

4 quick rules for writing neutral survey questions

Once you have seen enough leading questions, the fix becomes mechanical. Any good survey creator should run every question through these four checks before it ships. These apply across all question types, from multiple choice to open-ended questions.

- Strip the adjectives. If the question contains “great,” “easy,” “slow,” “frustrating,” or “amazing,” delete the adjective and see if the question still works. It almost always does.

- Check the assumptions. Read the question and ask: what does this question assume the customer already feels, did, or noticed? If the assumption is not safe, rephrase.

- Make disagreement easy. A neutral question lets the respondent say “no,” “neither,” or “I did not notice” without feeling like they pushed back on the brand.

- Ask one thing at a time. If you can split the question into two cleaner questions, do it. One concept per question, every time.
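Two of these checks are mechanical enough to rough out in code. The sketch below is a first-pass screen, not a substitute for reading the question: the word list comes from the examples above plus “love” and “loved” (an added assumption), and the check for “and” is a crude heuristic that will flag some harmless questions. The function name `audit_question` is hypothetical.

```python
import re

# Starter list of loaded words; extend it with terms from your own surveys.
LOADED_WORDS = {"great", "easy", "slow", "frustrating", "amazing", "love", "loved"}


def audit_question(question: str) -> list[str]:
    """Return human-readable flags for a single survey question."""
    flags = []
    words = set(re.findall(r"[a-z']+", question.lower()))

    # Rule: strip the adjectives.
    loaded = sorted(words & LOADED_WORDS)
    if loaded:
        flags.append("loaded language: " + ", ".join(loaded))

    # Rule: ask one thing at a time (crude: any "and" is worth a second look).
    if "and" in words:
        flags.append("possible double-barreled question: contains 'and'")

    return flags


questions = [
    "How much did you love our new checkout experience?",
    "How satisfied are you with our product quality and customer service?",
    "How would you describe your checkout experience today?",
]
for q in questions:
    print(q, "->", audit_question(q) or "no flags")
```

The assumption and disagreement checks still need a human reader, since they depend on what the customer has actually confirmed and which answer options are offered.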

These rules sound obvious until you audit your own active surveys. Most teams find at least one leading question per survey, and the more traffic a survey gets, the more expensive that question becomes.

Where leading questions cause the most damage

Not all surveys are equal. A leading question in a one-off feedback prompt is a small problem. A leading question in a post-purchase survey that runs on every order for a year is a structural problem, because that data shapes attribution decisions, creative testing, product development, and budget allocation.

A few places to audit first:

Post-purchase attribution questions. If your “How did you hear about us?” question lists your three highest-spend channels first and lumps everything else into “Other,” your reporting will systematically over-credit those three channels. Order matters. Randomize it.

NPS follow-up questions. A clean NPS question on a 0 to 10 scale is one of the most reliable signals in customer research, but the follow-up question is where teams introduce bias. “What did you love most?” assumes love. “What is the primary reason for your score?” does not.

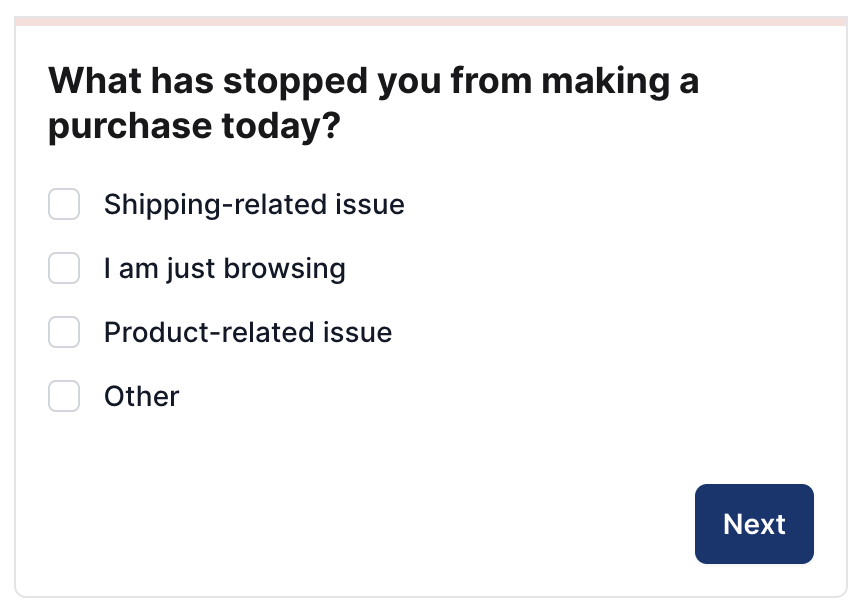

Cart abandonment surveys. Asking “Was the price too high?” leads respondents toward price as the reason, even when the actual cause was a missing payment method or unclear return policy. Open it up with a question that lets the real friction surface: “What stopped you from completing your order today?”

Win-back surveys. “What would bring you back?” assumes the customer wants to come back. “Is there anything that would make you consider buying from us again?” gives them an honest exit.
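For the attribution question above, randomizing answer order per respondent can be sketched as follows. The channel names and the `randomized_options` helper are hypothetical, and keeping “Other” pinned to the end is a design assumption rather than a rule. Most survey tools offer a built-in setting for this, so treat the code as an illustration of the behavior to look for.

```python
import random


def randomized_options(options, pinned_last=("Other",), rng=None):
    """Shuffle answer options for one respondent, keeping pinned options at the end."""
    rng = rng or random.Random()
    shuffled = [o for o in options if o not in pinned_last]
    rng.shuffle(shuffled)  # in-place shuffle of the non-pinned options
    pinned = [o for o in options if o in pinned_last]
    return shuffled + pinned


# Hypothetical channel list; list every meaningful channel instead of lumping into "Other".
CHANNELS = ["Instagram", "TikTok", "Google search", "Podcast", "Friend or family", "Email", "Other"]

# Each respondent sees the channels in a different order, with "Other" always last.
for respondent_id in range(3):
    print(respondent_id, randomized_options(CHANNELS))
```

Because each channel appears near the top of the list about equally often, no channel gets extra credit just for its position.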

Real example of avoiding the leading question trap

When Prismfly partnered with CrunchLabs to improve homepage conversion, the post-purchase data they leaned on came from neutrally worded questions. Customers were not asked, “What did you love about CrunchLabs?” They were asked what influenced their purchase, what they were hoping the product would do, and what almost stopped them from buying.

That neutrality is what made the data useful. The team saw recurring themes around trust signals, incentives, and clarity around value, including signals they had not expected to surface. When those themes were translated into homepage messaging tests, conversion rate increased by 4.7%, and revenue increased by 10.2%.

Leading questions would have produced a different result. The team would have heard variations of “I loved everything,” which is pleasant to read and impossible to act on.

Clean survey design and neutral phrasing are not just best practices. They are what separates customer feedback that drives revenue from customer feedback that decorates a dashboard.

The follow-up question is where the real insight lives

Most survey design energy goes into the first question. The follow-up is usually an afterthought, which is unfortunate because the follow-up is where the most valuable data lives.

A clean follow-up sounds like:

- “What is the primary reason for your answer?”

- “Is there anything we could have done differently?”

- “What is one thing you wish we had told you before you bought?”

These questions work because they assume nothing. They do not push toward positive or negative. They give the respondent a small amount of space to say something true, which is often something the team has never heard before.

When you combine clean primary questions with open-ended follow-ups, you stop running surveys that confirm what you already believe. You start running surveys that surprise you, and the surprises are where the next test, the next campaign, and the next product decision live.

Where to go from here

Audit one active survey this week. Look for adjectives, assumptions, double-barreled questions, and imbalanced scales. Rewrite the worst offender. Watch what changes when the next batch of survey responses comes in.

For more examples of questions that actually work, our Post-Purchase Survey Question Bank has 200+ questions broken down by vertical, audience, and purpose. Every question is one that respondents can answer honestly, and honest answers are the only kind of survey data worth collecting.