Most ecommerce brands measuring customer satisfaction start with CSAT. It’s familiar, easy to calculate, and maps cleanly to a percentage. So brands track it, report on it, and assume a CSAT score above 80% means things are going well. Often they are.

But a CSAT score only captures how someone felt at one moment in time. It tells you nothing about whether they’ll come back.

What is a customer satisfaction score?

CSAT (Customer Satisfaction Score) is one of several key customer satisfaction metrics. It measures how satisfied a customer was with a specific interaction, whether that’s a purchase, a support conversation, or a delivery.

You ask something like: “How satisfied were you with your experience today?” That’s your CSAT question. Customers respond on a 1 to 5 rating scale, with 1 being very dissatisfied and 5 being very satisfied.

How to calculate a CSAT score

Divide positive responses (ratings of 4 or 5) by the total number of responses, then multiply by 100.

CSAT Score = (Number of satisfied customers / Total number of responses) × 100

For example, 80 satisfied customers out of 100 total respondents gives you an 80% CSAT score. Simple enough. The catch is that simplicity cuts both ways: CSAT is easy to collect, but just as easy to misread.
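Here is a minimal Python sketch of that calculation, assuming ratings arrive as a plain list of integers on the 1 to 5 scale. The function name `csat_score` and the sample ratings are hypothetical, for illustration only.

```python
def csat_score(ratings: list[int]) -> float:
    """Hypothetical helper: percentage of 1-5 ratings that are a 4 or 5."""
    if not ratings:
        raise ValueError("need at least one response")
    for r in ratings:
        if not 1 <= r <= 5:
            raise ValueError(f"rating out of range: {r}")
    # Only 4s and 5s count as "satisfied"; 1-3 count toward the total only.
    satisfied = sum(1 for r in ratings if r >= 4)
    return 100 * satisfied / len(ratings)


# Toy data: 80 satisfied responses (50 fives, 30 fours) out of 100 total.
ratings = [5] * 50 + [4] * 30 + [3] * 12 + [2] * 5 + [1] * 3
print(csat_score(ratings))  # 80.0
```

Note that a 3 counts exactly like a 1 here: neutral and unhappy customers both simply fall outside the "satisfied" bucket, which is part of why the score is easy to misread.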

What is a good CSAT score range?

Most industry benchmarks put a solid CSAT score between 75% and 85%. Here’s what the score ranges generally signal:

- Below 50%: Fewer than half of your respondents were satisfied. Dig into customer feedback immediately.

- 50% to 70%: Average territory. Customers aren’t churning, but they’re not loyal either.

- 70% to 85%: Good. This is where most healthy ecommerce and retail brands land.

- Above 85%: Excellent. You’re outperforming most industry peers.

Ecommerce and retail CSAT benchmarks tend to sit around 82%, which puts them among the stronger categories, ahead of industries like insurance or utilities. The American Customer Satisfaction Index publishes cross-industry data if you want a broader reference point.

But benchmarks are context, not a verdict. A brand at 72% that has improved 8 points over two quarters is doing something right. A brand at 84% that hasn’t moved in a year may be missing something.

The other thing benchmarks don’t tell you is why.

A CSAT score tells you whether customers were satisfied or not. It doesn’t tell you what drove the rating, which part of the customer experience they were responding to, or what would need to change to move the number. That’s where most brands hit the ceiling with CSAT.

Where CSAT falls short for ecommerce brands

CSAT is designed for moments, not relationships. It works well for measuring a specific touchpoint—how a support ticket was handled or whether a delivery arrived on time. Used that way, it’s a useful operational metric for customer support teams.

The problem is when brands use CSAT as a proxy for overall satisfaction. A customer can have a great unboxing experience, rate it 5 out of 5, and still churn because the product didn’t meet their expectations or a competitor caught their attention.

CSAT captured the moment. It missed the story.

For ecommerce brands, where the value of a customer compounds over repeat purchases, referrals, and word of mouth, a customer satisfaction survey tied to a single customer interaction only tells you so much. You need a metric that reflects customer sentiment about the brand overall, not just the last thing that happened to them.

Other metrics worth knowing: CES

Before getting into NPS, it’s worth knowing where Customer Effort Score (CES) fits. CES measures how easy it was for a customer to complete an action, such as resolving an issue, finding a product, or completing onboarding.

It’s particularly useful for support use cases where friction directly drives customer churn. If you’re trying to optimize self-service flows or reduce support ticket volume, CES can surface friction that a CSAT score won’t catch.

Each of these metrics—CSAT, CES, and NPS—serves a different purpose across the customer journey. Knowing which one to deploy at which touchpoint is how brands turn survey responses into actionable insights.

NPS vs. CSAT: The key difference

NPS (Net Promoter Score) measures customer loyalty, not a single interaction. It asks one question: “How likely are you to recommend us to a friend or colleague?”

Customers respond on a 0 to 10 scale. How the NPS question is worded and presented affects how customers answer: question wording and formatting influence response rates and answer quality more than most brands expect.

The difference sounds subtle, but it changes what you’re actually measuring.

CSAT asks: how did that go?

NPS asks: how do you feel about us?

One is a transaction check. The other is a relationship check. For brands tracking customer retention and repeat purchase rate, that distinction matters a lot.

High NPS scores correlate with happy customers who buy again, refer friends, and stay loyal when a competitor offers a discount. Low scores surface customers at risk before they churn, giving you a window to act. CSAT doesn’t give you that. You can have a clean run of satisfied customer interactions and still be losing customers you didn’t know were on the fence.

BrüMate: NPS as a product development tool

BrüMate doesn’t treat NPS as a vanity number. Hans Harris, who oversees growth at BrüMate, breaks down NPS by specific product purchases to identify where the brand needs to improve. Low scores tied to a particular product go directly back into design and engineering. High scores validate which experiences are building real loyalty.

His reasoning goes beyond internal operations, too.

“A lot of brands want to track NPS because investors love to see it as a good indicator of longevity,” Hans explains. “It shows we’re not just making a quick buck at the customer’s expense but are here to stay because our customers love the product and brand, and are going to tell their friends and family about it.”

BrüMate’s full story gets into how that feedback loop works in practice.

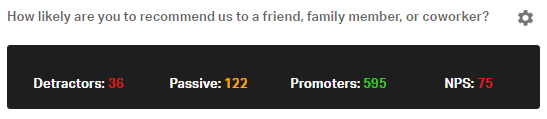

How KnoCommerce calculates NPS

KnoCommerce’s NPS question uses a standard 0 to 10 scale and automatically segments responses into three groups:

- Detractors: Scores of 0 to 6

- Passives: Scores of 7 or 8

- Promoters: Scores of 9 or 10

Each Detractor is assigned a value of -100, each Passive receives 0, and each Promoter receives 100. These values are averaged across all respondents to produce the final NPS. A score of 100 means every respondent was a Promoter, a score of -100 means every respondent was a Detractor, and a score of 0 means Promoters and Detractors cancel each other out.
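A minimal Python sketch of the averaging method described above. The function name `nps` and the plain list-of-integers input are assumptions for illustration, not KnoCommerce’s implementation. Averaging the -100/0/100 values works out to the same number as subtracting the percentage of Detractors from the percentage of Promoters.

```python
def nps(scores: list[int]) -> float:
    """Hypothetical helper: Net Promoter Score from 0-10 responses.

    Detractors (0-6) count as -100, Passives (7-8) as 0, and
    Promoters (9-10) as +100; NPS is the average across respondents.
    """
    if not scores:
        raise ValueError("need at least one response")
    total = 0
    for s in scores:
        if not 0 <= s <= 10:
            raise ValueError(f"score out of range: {s}")
        if s >= 9:
            total += 100  # Promoter
        elif s <= 6:
            total -= 100  # Detractor
        # 7 or 8: Passive, contributes 0
    return total / len(scores)


# Toy data: 50 Promoters, 30 Passives, 20 Detractors (100 responses).
scores = [10] * 30 + [9] * 20 + [8] * 20 + [7] * 10 + [6] * 10 + [3] * 10
print(nps(scores))  # 30.0, the same as 50% Promoters minus 20% Detractors
```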

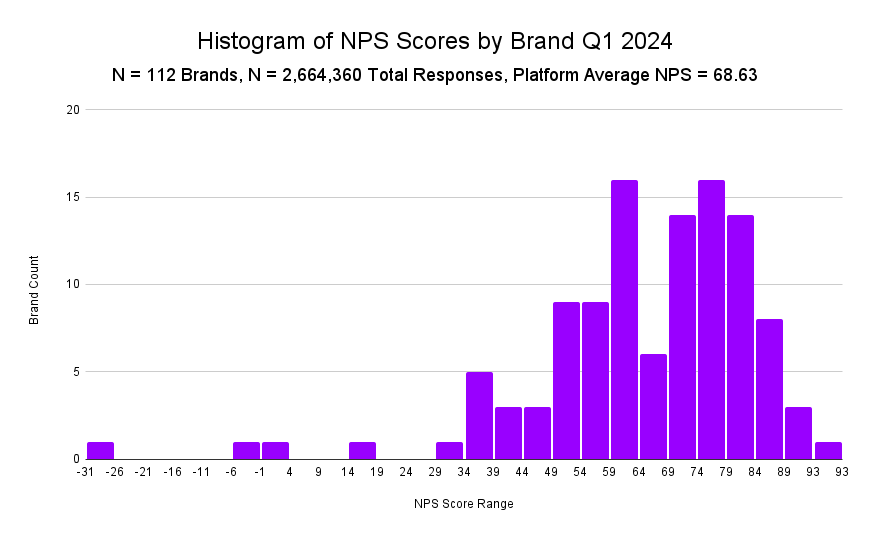

Any score above 0 is a positive sign. Scores above 50 are excellent. Most brands fall somewhere in between. KnoCommerce publishes benchmark data from real brands so you can see how your score compares against others in the space.

Where do most brands land?

Based on KnoCommerce data from Q1 2024, brands running NPS surveys with at least 100 responses showed a wide distribution of scores. Having that distribution available means you’re not measuring yourself against a generic industry average.

You’re seeing where you actually stand relative to other ecommerce brands running the same question in the same context.

What to do with your NPS data

Collecting NPS is the easy part. Most brands underuse what they get back.

The first thing to do is break your score down by customer segment. A brand-wide NPS of 45 can hide a lot. First-time buyers might be sitting at 25, while repeat customers are at 65. Those are two completely different problems.

The first-time buyer score tells you something is off in the early experience, whether that’s onboarding, product expectations, or post-purchase communication. The repeat customer score tells you the relationship is strong once people stick around. You can’t see that split in a blended number, and you can’t act on it if you can’t see it.

The second thing is tracking movement over time. A snapshot NPS tells you where you are. Monthly or quarterly tracking tells you whether what you’re doing is working.

If you launch a new packaging experience and your NPS moves up 6 points the following month, that’s a signal. If you run a promotion and your score drops because it attracted a lower-intent customer base, that’s a signal too. The trend line is where the learning happens.
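Both the segment breakdown and the month-over-month tracking come down to the same operation: group responses by some attribute, then compute NPS for each group. Below is a minimal Python sketch, assuming each response is stored with a segment label and a month. The `Response` fields, the `nps_by` helper, and the sample scores are all hypothetical toy data, not KnoCommerce output.

```python
from collections import defaultdict
from typing import Callable, NamedTuple


class Response(NamedTuple):
    segment: str  # hypothetical label, e.g. "first_time" or "repeat"
    month: str    # hypothetical "YYYY-MM" survey month
    score: int    # 0-10 answer to the NPS question


def nps(scores: list[int]) -> float:
    # % Promoters (9-10) minus % Detractors (0-6); Passives (7-8) add nothing.
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    return 100 * (promoters - detractors) / len(scores)


def nps_by(responses: list[Response], key: Callable[[Response], str]) -> dict[str, float]:
    # Bucket scores by the chosen attribute, then score each bucket separately.
    groups: dict[str, list[int]] = defaultdict(list)
    for r in responses:
        groups[key(r)].append(r.score)
    return {k: nps(v) for k, v in sorted(groups.items())}


responses = [
    Response("first_time", "2024-01", 6),
    Response("first_time", "2024-01", 4),
    Response("repeat", "2024-01", 9),
    Response("repeat", "2024-01", 8),
    Response("first_time", "2024-02", 9),
    Response("first_time", "2024-02", 5),
    Response("repeat", "2024-02", 10),
    Response("repeat", "2024-02", 8),
]

blended = nps([r.score for r in responses])                   # 0.0
by_segment = nps_by(responses, key=lambda r: r.segment)       # {'first_time': -50.0, 'repeat': 50.0}
by_month = nps_by(responses, key=lambda r: r.month)           # {'2024-01': -25.0, '2024-02': 25.0}
change = by_month["2024-02"] - by_month["2024-01"]            # 50.0
```

In this toy data, a blended NPS of 0 hides first-time buyers at -50 and repeat customers at +50, the same kind of split the brand-wide number conceals. With only four responses per group, though, flipping a single Detractor to a Promoter moves a group’s score by 50 points, so real segment and month comparisons need much larger samples.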

The third is following up with Detractors. This is the highest-leverage action most brands skip. Customers who leave a low NPS score and then get a personal reply from the brand (an actual human response, not an automated email) are far more likely to give the brand another chance than customers who hear nothing. The score identifies them.

The follow-up is what changes the outcome. Knowing what to ask after the NPS score is what turns a number into something you can actually act on.

Satisfaction is a moment. Loyalty is the business.

CSAT gives you a useful read on specific interactions. A score between 75% and 85% is solid, above 85% is excellent, and anything below 70% warrants a closer look.

But satisfaction at a moment and loyalty over time are different things, and for ecommerce brands, loyalty is the number that drives revenue.

NPS captures how customers feel about your brand, not just the last thing you did. Combined with segmentation and a consistent follow-up process, it gives you a feedback system that actually informs decisions rather than just confirming that things are roughly fine.

Your CSAT score is a starting point. KnoCommerce helps you go further. Book a demo here!