Your analytics tell you what customers did. They bought. They came from somewhere. The conversion happened.

What they can’t tell you is why someone bought, what almost stopped them, or how they found you in the first place. A post-sales questionnaire is built to close that gap.

Done right, post-purchase surveys give you zero-party data straight from the source. The kind that sharpens ad targeting, informs product decisions, and gives you more accurate attribution than any pixel can.

Understanding the importance of post-sales questionnaires

Most brands have more data than they know what to do with. What they’re often missing is the layer that explains it. Post-sales questionnaires are where that layer comes from.

Why collect customer feedback after a sale?

The moment after a purchase is one of the few times a customer is fully engaged with your brand. They’ve just made a decision. That makes their feedback more accurate and more honest than feedback collected at almost any other point in the customer journey.

Most analytics tell you what happened. A post-purchase survey tells you why. Why they bought, what almost stopped them, and where they came from. That’s the kind of survey data that shapes ad spend, improves product pages, and builds a clearer picture of who your customers actually are.

The brands that get the most out of post-purchase research treat it as a primary data source, not a backup check when the pixels disagree.

How customer insights drive business growth

Survey data compounds. Attribution responses tell your paid team which channels are actually working. Barrier questions reveal friction in the checkout experience that your CRO team can fix. Segmentation questions feed Klaviyo flows that make every subsequent email more relevant.

Each answer a customer gives you is a data point that improves a decision somewhere in the business. That’s why the brands running consistent post-purchase research tend to outpace those running on behavioral data alone. They’re making decisions based on what customers said, not just what they did.

Types of post-sales questionnaires

Not every survey serves the same purpose. The questions you ask right after checkout are different from the ones you ask two weeks after delivery, and both are different from a survey designed to understand who your customers are beyond their order history. Knowing which format fits which goal is where most brands can improve.

Most brands treat the post-purchase survey as one thing. In practice, there are a few distinct types, each suited to different goals.

Post-purchase survey

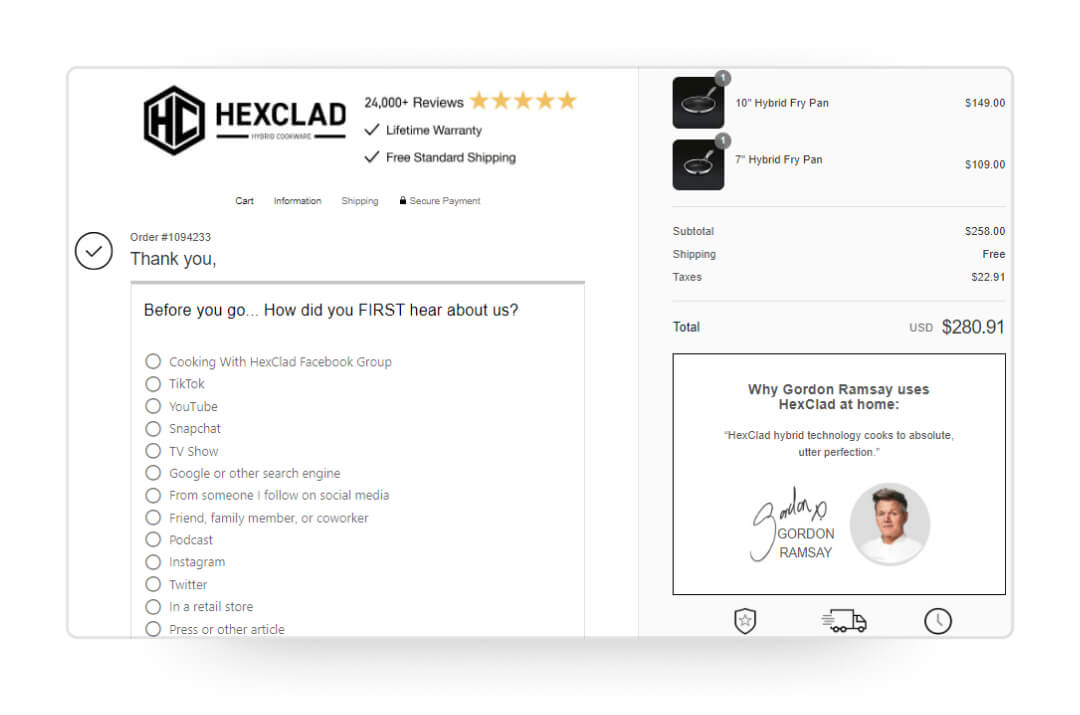

The most common format. Typically triggered on the order confirmation page or sent via email, these surveys are designed to capture attribution data, identify checkout friction, or understand what drove the purchase decision. The confirmation page gets the highest response rates because the customer is still in session.

Product feedback form

This one needs to wait. Asking about product quality the moment someone checks out is pointless because they haven’t received it yet. Product feedback is best collected three to five days after estimated delivery, when the customer has actually used what they bought. Questions here focus on quality, fit, and whether the product matched expectations.

Market research questionnaire

Less about the transaction, more about the customer. These surveys dig into buyer personas, purchase motivations, and brand perception. “Which of these best describes you?” and “What were you looking for that brought you to us today?” are classic examples.

They’re not satisfaction questions. They’re intelligence questions.

Crafting effective post-purchase survey questions

Good survey questions don’t happen by default. The wrong question at the wrong moment produces data that looks useful but isn’t. The right question, asked at the right point in the customer journey, gives you something you can actually act on.

The format matters as much as the content. Multiple-choice questions get higher completion rates than open-ended ones, especially as the first question in a survey. Open-ended questions can follow, but leading with them creates friction.

Here are the core areas to build questions around.

Attribution

“How did you hear about us?” is the foundational question for DTC brands trying to understand which channels are actually driving purchases. iOS privacy changes, cross-device behavior, and growing channel fragmentation (including the rise of social media as a discovery channel) mean your paid dashboards tell only part of the story.

Survey-based attribution fills in the gaps. It won’t be perfect, but it gives paid teams directional data to validate spend and shift budget. Across KnoCommerce brands, this question consistently becomes one of the most actionable data points within weeks of going live.

Barriers to purchase

“Was there anything that almost prevented you from buying today?” is one of the most underused questions in post-purchase research. You’re asking someone who just converted what nearly stopped them. The answers reveal checkout process friction, pricing concerns, messaging gaps, and missing trust signals, all from people who already bought.

If a meaningful share of customers mention they almost left because shipping felt unclear or return policies were hard to find, that’s a product page fix. These are exactly the pain points that don’t show up in behavioral metrics but cost you real revenue.

Customer satisfaction

A simple NPS or CSAT question gives you a benchmark to track over time. More useful than the score itself is the follow-up: “What’s the main reason for your score?” Without the open-ended follow-up, you know sentiment. With it, you know why. Tracking these metrics consistently is what turns a satisfaction survey into a reliable signal for customer loyalty.

Product quality

Ask after delivery. Rate-based questions combined with an open comment field give you both the quantitative benchmark and the specific language customers use to describe their experience. That language is valuable for ad copy, product descriptions, and review responses.

Shopping experience

Was checkout smooth? Was the product information clear? These questions are best asked on the confirmation page while the purchase experience is still fresh.

Friction in the user experience directly affects abandonment, and your customers are the best data source on where that friction lives.

Examples of post-purchase survey questions

Here are specific survey questions organized by goal. If you’re starting from scratch, survey templates can help you build a working question set faster, then adjust from there.

For attribution:

- Where did you first hear about us? (multiple choice, with an “other” option and a follow-up text field)

- What finally convinced you to make your purchase today?

For barriers and conversion:

- Was there anything that almost stopped you from completing this purchase?

- How easy was it to find what you were looking for on our site?

For customer segmentation:

- Which of these best describes you? (build options around your actual buyer personas)

- What’s the primary reason you purchased today?

For product feedback (post-delivery):

- How would you rate the quality of what you received?

- Did the product match what you expected based on our site?

- What’s one thing we could improve?

For satisfaction:

- On a scale of 0 to 10, how likely are you to recommend us to a friend?

- What’s the main reason for your score?
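If you use the 0 to 10 recommendation question above, the result is usually reported as a Net Promoter Score: the percentage of promoters (9 or 10) minus the percentage of detractors (0 to 6), with 7s and 8s counted as passives. Here is a minimal Python sketch of that arithmetic, assuming your survey tool lets you export the raw 0 to 10 answers as a list of integers (the sample scores are made up):

```python
def net_promoter_score(scores: list[int]) -> float:
    """Return NPS on a -100..100 scale: % promoters (9-10) minus % detractors (0-6)."""
    if not scores:
        raise ValueError("need at least one response")
    if any(s < 0 or s > 10 for s in scores):
        raise ValueError("scores must be between 0 and 10")
    promoters = sum(1 for s in scores if s >= 9)
    detractors = sum(1 for s in scores if s <= 6)
    # Passives (7 and 8) count toward the total but not toward either group.
    return 100 * (promoters - detractors) / len(scores)


# Illustrative scores, not real data.
responses = [10, 9, 8, 7, 6, 3, 10, 9, 5, 10]
print(net_promoter_score(responses))
```

In that sample, five promoters and three detractors out of ten responses give a score of 20. The single reading matters less than how it moves over time, and the open-ended follow-up tells you why it moved.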

Tailoring the right questions to target audiences

Your survey tool is the same regardless of what you sell. Your questions shouldn’t be. A supplements brand and an apparel brand are talking to completely different customers with completely different motivations, and the questions that work for one will fall flat for the other.

Oats Overnight is a useful example of how far you can take this. Their post-purchase survey runs 20 questions, which breaks the “keep it short” rule most brands follow. But they built it deliberately, and the results back it up: a 58% response rate and a 77% completion rate.

It works because the questions aren’t generic. They ask about purchase motivations, health goals, occupation, household income, and retail shopping habits. A customer who selects “I was looking for a vegan option” gets a completely different Klaviyo flow from that point forward. Testimonials from other vegan customers. Invites to relevant brand communities. Product updates focused on vegan flavors. The survey isn’t just research. It’s the first step in segmentation.

That’s the standard to aim for. Every question should have a clear owner and a clear use case. If you can’t answer “what will we do differently based on the survey responses to this question,” it probably doesn’t belong.

That’s the standard to aim for. Every question should have a clear owner and a clear use case. If you can’t answer “what will we do differently based on the responses to this question,” it probably doesn’t belong.

Analyzing and utilizing feedback

Collecting feedback is the easy part. What you do with it determines whether your survey is a research exercise or an actual business input. The method you use to collect data, the channel you use to reach customers, and the framework you bring to interpreting results all affect what you walk away with.

Data collection techniques

How you collect survey responses shapes the quality of what you get back. The two main formats for post-sales questionnaires are on-site surveys and link-based delivery.

- On-site surveys embed directly in the order confirmation page. The customer is still in-session, engagement is high, and you don’t need to compete with an inbox. This is where attribution and shopping experience questions perform best.

- Link-based delivery sends a survey URL through email, SMS, or a post-purchase flow. It takes the customer off-site but opens up more question types, longer surveys, and the ability to reach customers at a different point in their experience with the product.

Most brands use both, with different questions mapped to each format based on when the data is most useful.

Online surveys

Email delivery works well for product feedback and NPS, where timing matters. Sending two to four days after delivery means you’re getting feedback from customers who have actually used what they bought.
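If you are wiring up the send yourself rather than relying on a built-in flow delay, the timing rule reduces to a date window. A minimal sketch, assuming you have a delivery date for each order (for example, from carrier tracking). The constants mirror the two-to-four-day guidance above, and the function names are hypothetical:

```python
from datetime import date, timedelta

# Window from the guidance above: two to four days after delivery.
MIN_DAYS_AFTER_DELIVERY = 2
MAX_DAYS_AFTER_DELIVERY = 4


def feedback_send_window(delivered_on: date) -> tuple[date, date]:
    """Return the earliest and latest dates to send the product feedback email."""
    earliest = delivered_on + timedelta(days=MIN_DAYS_AFTER_DELIVERY)
    latest = delivered_on + timedelta(days=MAX_DAYS_AFTER_DELIVERY)
    return earliest, latest


def should_send_today(delivered_on: date, today: date) -> bool:
    """True if today falls inside the send window for this order."""
    earliest, latest = feedback_send_window(delivered_on)
    return earliest <= today <= latest


delivered = date(2024, 5, 1)
print(feedback_send_window(delivered))  # window runs May 3 to May 5, 2024
print(should_send_today(delivered, date(2024, 5, 2)))  # too early
print(should_send_today(delivered, date(2024, 5, 4)))  # inside the window
```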

You can automate this using integrations between your survey tool and your email platform, so each survey goes out on schedule without manual work. For Shopify brands, post-purchase survey tools that connect directly to your store make this much easier to set up and manage.

Telephone interviews

For brands doing deeper qualitative research, phone interviews get you something surveys can’t: unscripted responses. A customer on a call will tell you things they’d never type into a survey field. The tradeoff is scale. You can interview ten customers in the time it takes to collect a thousand survey responses.

The most effective approach is using survey data to identify who to call. If your post-purchase survey surfaces a consistent theme in open-ended responses, like customers repeatedly mentioning pricing as a concern, a handful of follow-up calls can help you understand what’s driving that. The survey finds the signal. The interview explains it.

Interpreting results for decision making

Raw responses aren’t insights on their own. You need volume and a framework for making sense of what you collect.

For attribution data, look at channel distribution. If 40% of respondents say they heard about you through a friend but your referral program doesn’t exist yet, that’s an opportunity worth sizing. If Meta shows up as the top paid channel in your survey data but your ROAS looks weak, dig into your creative and audience mix before you cut spend.
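Channel distribution is simply the share of respondents naming each answer. A minimal Python sketch, assuming you export the “Where did you first hear about us?” answers as plain strings. It normalizes case and surrounding whitespace so “Instagram” and “instagram ” count together. The sample answers are made up for illustration:

```python
from collections import Counter


def channel_distribution(answers: list[str]) -> dict[str, float]:
    """Percentage of respondents citing each channel, most common first."""
    # Normalize case and surrounding whitespace; skip blank answers.
    counts = Counter(a.strip().lower() for a in answers if a.strip())
    total = sum(counts.values())
    return {channel: round(100 * n / total, 1) for channel, n in counts.most_common()}


# Illustrative answers, not real data.
answers = [
    "Friend or family", "Instagram", "friend or family", "Google", "Podcast",
    "Friend or family", "Instagram ", "TikTok", "Friend or Family", "Google",
]
for channel, share in channel_distribution(answers).items():
    print(f"{channel}: {share}%")
```

Here “friend or family” lands at 40%, the referral scenario described above. Free-text answers from the “other” option would need to be mapped onto your channel list before counting.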

Brands syncing Kno with Klaviyo can build segments off survey responses directly. The data isn’t just sitting in dashboards. It’s triggering flows. That’s the functionality that turns collecting feedback into a real input for marketing efforts, not just a reporting exercise.

For open-ended responses, look for recurring language rather than one-off comments. If five customers in the same week independently mention they weren’t sure about sizing, that’s not anecdotal. It’s a product page gap.
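One lightweight way to spot recurring language is to tag each open-ended response with themes from keyword lists, then count themes per week. A sketch in Python, assuming responses arrive as (submission date, text) pairs. The theme names, keywords, and the threshold of five are hypothetical and should come from your own customers’ wording. Keyword matching is crude, so treat the counts as a prompt to read the underlying responses, not a replacement for reading them:

```python
import re
from collections import Counter
from datetime import date

# Hypothetical themes and keywords; build yours from real customer wording.
THEMES = {
    "sizing": {"size", "sizing", "fit", "fits"},
    "shipping": {"shipping", "delivery"},
    "price": {"price", "expensive", "cost"},
}


def tag_themes(text: str) -> set[str]:
    """Return every theme whose keywords appear as whole words in the text."""
    words = set(re.findall(r"[a-z']+", text.lower()))
    return {theme for theme, keywords in THEMES.items() if words & keywords}


def recurring_themes(
    responses: list[tuple[date, str]], min_count: int = 5
) -> dict[tuple[int, int, str], int]:
    """Count themes per ISO week and keep those mentioned at least min_count times."""
    counts = Counter()
    for submitted_on, text in responses:
        year, week, _ = submitted_on.isocalendar()
        for theme in tag_themes(text):
            counts[(year, week, theme)] += 1
    return {key: n for key, n in counts.items() if n >= min_count}


# Illustrative responses from one week, not real data.
week_of_responses = [
    (date(2024, 5, 6), "Not sure about the sizing"),
    (date(2024, 5, 7), "Worried the size would be off"),
    (date(2024, 5, 7), "Shipping was slow"),
    (date(2024, 5, 8), "Didn't know how it would fit"),
    (date(2024, 5, 9), "Sizing chart was confusing"),
    (date(2024, 5, 10), "Size info was hard to find"),
]
print(recurring_themes(week_of_responses))
```

Matching whole words keeps “fit” from firing on words like “benefit”. Sizing clears the threshold in that sample, while the single shipping mention does not.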

Segment by customer type when you can. New customers and repeat customers have different motivations. Treating their survey responses as the same population will hide useful patterns. Real-time data access, if your platform supports it, helps you catch these patterns early rather than in a quarterly review.
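Splitting by customer type can be as simple as grouping answers by how many orders the customer has placed. A minimal Python sketch, assuming each exported row carries an order count that includes the current order (so 1 means a first purchase). The row layout and the sample data are hypothetical:

```python
from collections import Counter, defaultdict


def answer_shares_by_segment(
    rows: list[tuple[int, str]],
) -> dict[str, dict[str, float]]:
    """Group (order_count, answer) rows into new vs repeat and return answer shares."""
    grouped = defaultdict(Counter)
    for order_count, answer in rows:
        segment = "new" if order_count <= 1 else "repeat"
        grouped[segment][answer] += 1
    # Convert each segment's counts into fractions of that segment's responses.
    return {
        segment: {answer: n / sum(counts.values()) for answer, n in counts.items()}
        for segment, counts in grouped.items()
    }


# Illustrative rows: (orders placed including this one, "primary reason you purchased")
rows = [
    (1, "Price"), (1, "Price"), (1, "Reviews"), (1, "Reviews"),
    (3, "Loyalty"), (5, "Loyalty"), (2, "Price"), (4, "Loyalty"),
]
print(answer_shares_by_segment(rows))
```

Pooled together, “Price” is the reason for three in eight purchases. Split out, it drives half of first orders and a quarter of repeat ones, which is the kind of pattern a single combined view hides.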

Best practices for implementing post-sales questionnaires

A well-designed question set means nothing if the survey reaches the wrong person at the wrong time with the wrong incentive structure. These are the operational decisions that separate surveys that generate noise from ones that generate signal.

Timing and method of delivery

The confirmation page gets the highest response rates for attribution and shopping experience questions. Email follow-ups sent two to four days after delivery work better for product feedback. SMS has high open rates but limited space, so reserve it for single-question surveys.

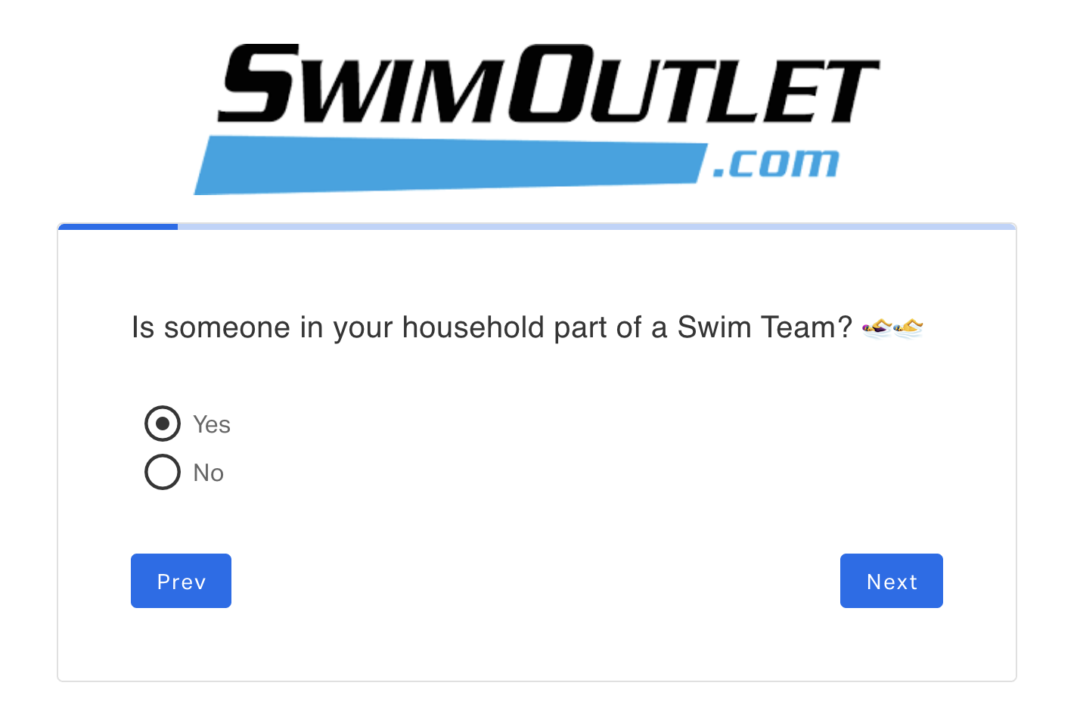

One common mistake: sending the same survey to every customer regardless of order history. A customer on their sixth purchase has a very different relationship with your brand than someone who just bought for the first time.

SwimOutlet’s post-purchase survey results are a good example of what you find when you segment by new versus returning buyers. They assumed 80% of their customers were competitive swimmers. Surveys revealed only 51% were, which changed how they positioned the brand entirely.

Incentivizing participation

Discount codes and loyalty points improve completion rates, especially for longer surveys. The tradeoff is that incentives can bring in responses from customers motivated by the offer rather than genuine feedback.

For attribution and NPS questions, the stakes are low enough that it’s not a major concern. For qualitative product feedback, keep the incentive modest so the offer doesn’t outweigh the reason to answer honestly.

Continuously updating your questionnaire

Customer behavior changes. New products, new channels, and shifts in competitive positioning all change what questions are most useful. A survey set up in 2023 and never touched since is likely missing questions that would help you understand what’s driving your business today.

Review your question set quarterly. Add questions when you launch new products or test new channels. Retire questions when they’ve generated enough data to make a decision. Think of this as a chance to optimize the survey over time, not a one-time setup.

Building a post-sales questionnaire that earns real data

The brands getting the most from post-purchase research share a few things. They ask fewer questions. They rotate based on what decision they’re trying to make. They treat survey data as a primary source.

A well-designed post-sales questionnaire is one of the few places where you’re getting customer data directly from respondents, in their own words, at the moment they’re most engaged. Most brands underinvest in it. The ones that don’t end up with a clearer picture of where buyers come from, what nearly drove them away, and what keeps them coming back.

KnoCommerce is built for exactly this. Post-purchase surveys designed for ecommerce brands, with attribution at the core and an average survey response rate above 45% across the platform.

If you’re curious about where to start, we have a question bank that includes 200+ survey question examples broken down by vertical, so you can filter to what’s actually relevant to your brand.